The Simulacrum is Insufficient

I’ve been thinking a lot about our collective inability to faithfully simulate human interaction. In any modern debate about technology replacing the effort of humans, someone on the pro-technology side will invariably expound on the cyclical nature of these arguments. Was it right for NASA to replace computers (mostly women who completed mathematical calculations) with computers (programmable machines that process data)? Was it acceptable to replace teams of men and oxen with the steam shovel? How far back can we go asking the question “is it okay to let technology take the place of humans?”

That’s not a question I’m going to be able to answer in this post. There probably is no ultimately satisfactory answer to that question. I’m not even interested in answering that question. In my admittedly rudimentary carpentry work, I often use tools that allow me to complete the work faster and more efficiently than I could without them. In fact, some of the time, the work can’t be done at all without said tools. Though it’s a fascinating area of philosophy, this post is not about tools. This is a post about human interaction.

The simulacrum is insufficient, because it is only a simulacrum. This is true regardless of the fidelity of the simulacrum. Even if I cannot tell the difference (it passes the Turing test, etc.), the simulacrum is not desirable. Regardless of whether or not I correctly perceive the simulacrum (do I detect if it is real?), it is either real or it is not. My perception doesn’t matter. Why? Because no machine, regardless of fidelity, should replace desired interactions with humans. Ever.

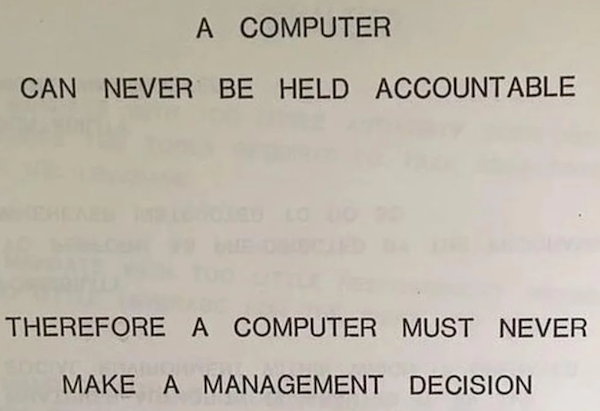

But why is that? Because the simulacrum can never be responsible and cannot be held accountable.

A page from a 1979 IBM manual that frankly does not go far enough

When humans interact with each other, when we are at our best, we are agreeing to a mutually beneficial social contract. Ostensibly (and in the case of legal matters, explicitly), we’re saying the following:

“The parties in this interaction are making choices and those choices have consequences. I am accountable for what I say and do during this interaction.”

People often forget this. Interaction is so commonplace that we take these rules for granted. And, as with all things that are taken for granted, our observance of the rules falters. I think this is why people accept interactions with “AI” (genAI, LLM, or whatever term you want to use) agents. Interaction, so commonplace, is incorrectly perceived as unimportant (perhaps cheap), so the identity (the humanity) of the other party we’re interacting with becomes unimportant.

However, in a fair society, our responsibilities in an interaction and the outcome of those same interactions are inextricably linked. But when the interaction includes a “participant” who owns no accountability, the interaction is false, even if that participant is acting on behalf of its creators. Some will point out that a human customer service agent also acts on behalf of their employer, and that this is no different than an AI agent acting on behalf of its creators. This is false because a human customer service agent lives and breathes and feels. Those feelings, that connection to other humans, are something that an AI agent can never possess. More importantly, the creators of AI agents, despite their evangelical protestations, don’t want an AI agent to truly connect with humans. Current capitalist ideology tells us that empathy and sympathy are an enemy to the bottom line. Just as the employer of a human customer service agent wants to extract themselves from their accountability to the customer, so does the creator (and buyer) of the AI agent.

The dangers of replacing genuine human interaction with simulacra are well known. There are numerous examples of people descending into madness and even suicide. But these are extreme examples. They are very important, and just as we must not take the rules of interaction for granted and let them slip away, we must not forget the everyday examples. Across this planet, billions of people ask simulacra to answer questions, and the simulacra are very, very bad at answering those questions. Admittedly, that’s a subjective statement, but I stick by it wholeheartedly. Far more intriguingly, though, humans turning to external sources for answers and getting poor results is not a new phenomenon. What is new is our turning to external sources for human interaction. That distinction is crucial. It’s one thing to believe an encyclopedia that contains a misprint, or a Wikipedia article without checking the source material. It’s entirely another thing to attempt to replace any human interaction with anything less than that. Naturally, the importance of this interaction remaining human is proportional to the importance of the interaction. For example, it’s more important for me to seek out a human therapist instead of an AI one than it is for me to insist a chatbot forward me to a human. But both of those interactions should exclusively be between humans, because then the interaction will have meant something to the two parties agreeing on the same social contract. Both parties are accountable. Any interaction with only one human will always be less than that, and insufficient.

Never trust a robot, even Bender. Okay, maybe Bender

These simulacra exist as another in a long line of futile attempts to separate the creator from the responsibility of creation and its impacts. It’s not an accident that the greatest evangelists and technocrats who promote these technologies seem to have a poor grasp of human interaction. Why does Mark Zuckerberg want so badly for the Metaverse to be successful? Could it be that he sees the redemption of his social deficiencies in this technology? Elon Musk’s perverted ideas of what society is and ought to be are essentially a mirror of what Grok has been programmed to provide. Why are we letting these fucking weirdos destroy human interaction?

This is the true problem and reckoning for our relationship with technology, and it always will be. This must be at the core of humanity’s rejection of anything that seeks to simulate the human mind or simulate interaction. It should be illegal to replace a participant in any human interaction with simulacra without informing the human party or parties. Following that, computers should be allowed to calculate but not to think, at least not think like a human does.

I’m not going to pretend that we, as a society, don’t have problems with human interaction that desperately require satisfactory resolution. Of course we do. The answer to this is not a series of tools designed to simulate human interaction, but rather a renewed commitment to better human interaction. This is also a renewed commitment to each other, and a renewed commitment to a mutually beneficial social contract; a universally beneficial social contract.